Achieving 1.85x higher performance for deep learning based object detection with an AWS Neuron compiled YOLOv4 model on AWS Inferentia | AWS Machine Learning Blog

GitHub - lrakai/aws-ml-cpu-v-gpu: A lab to compare CPU to GPU performance using the AWS Deep Learning AMI and p2.xlarge instance type

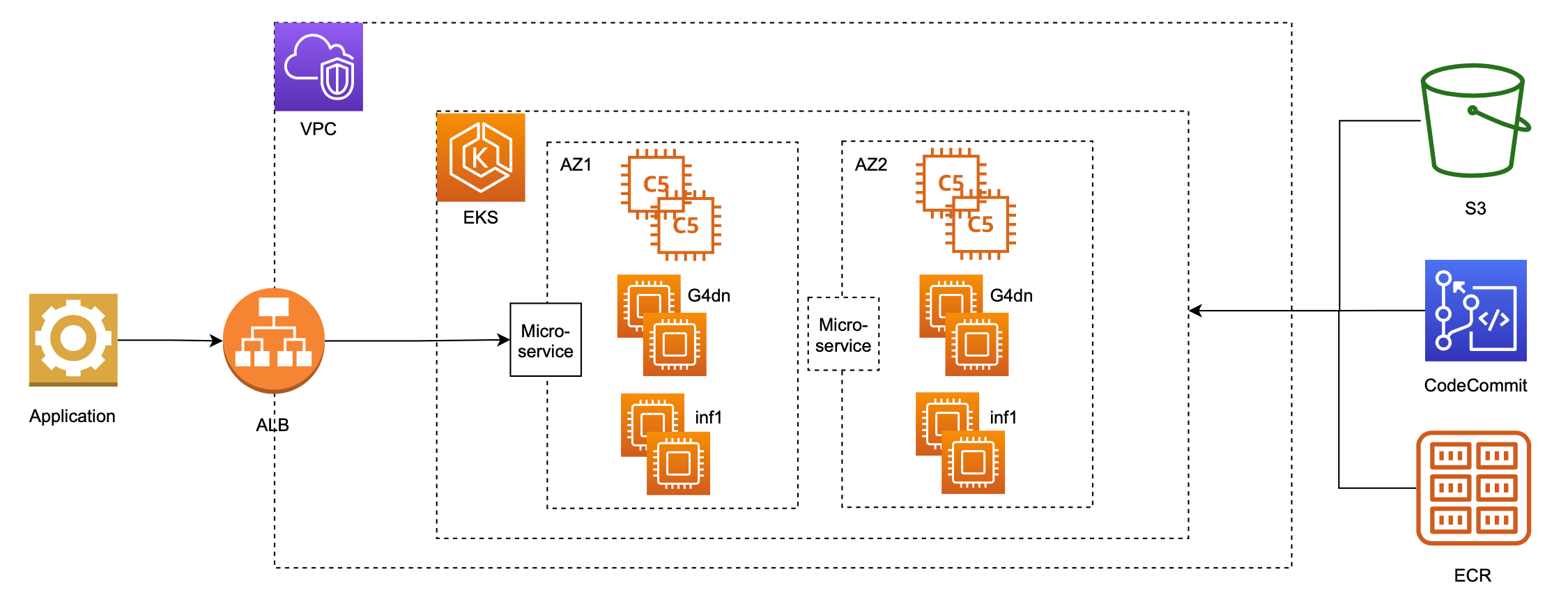

Serve 3,000 deep learning models on Amazon EKS with AWS Inferentia for under $50 an hour | AWS Machine Learning Blog

Reducing deep learning inference cost with MXNet and Amazon Elastic Inference | AWS Machine Learning Blog

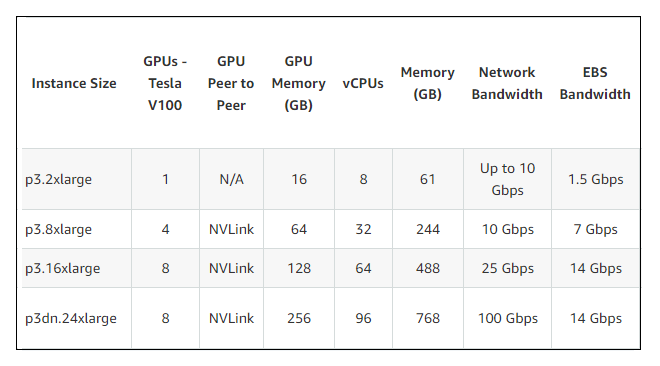

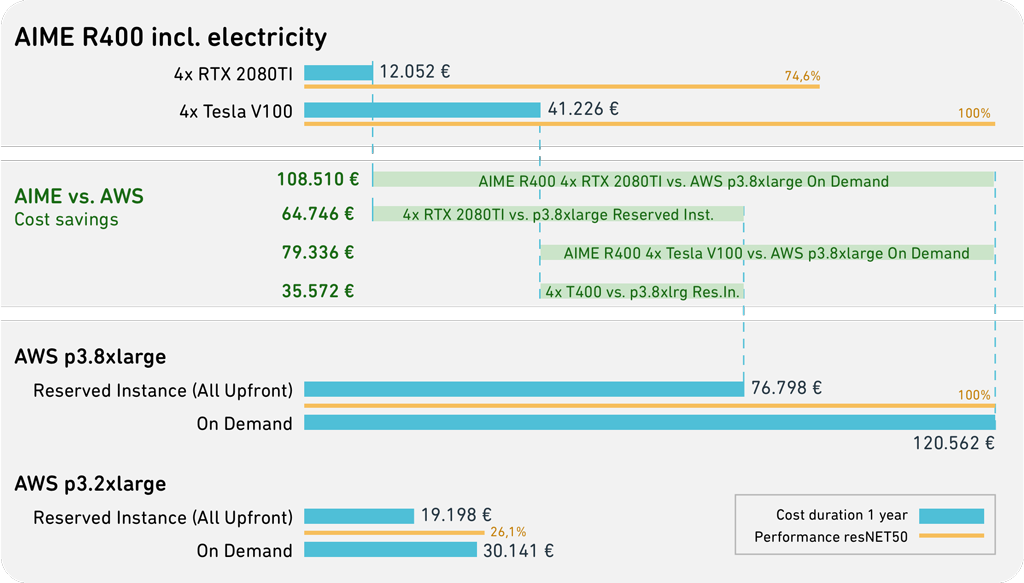

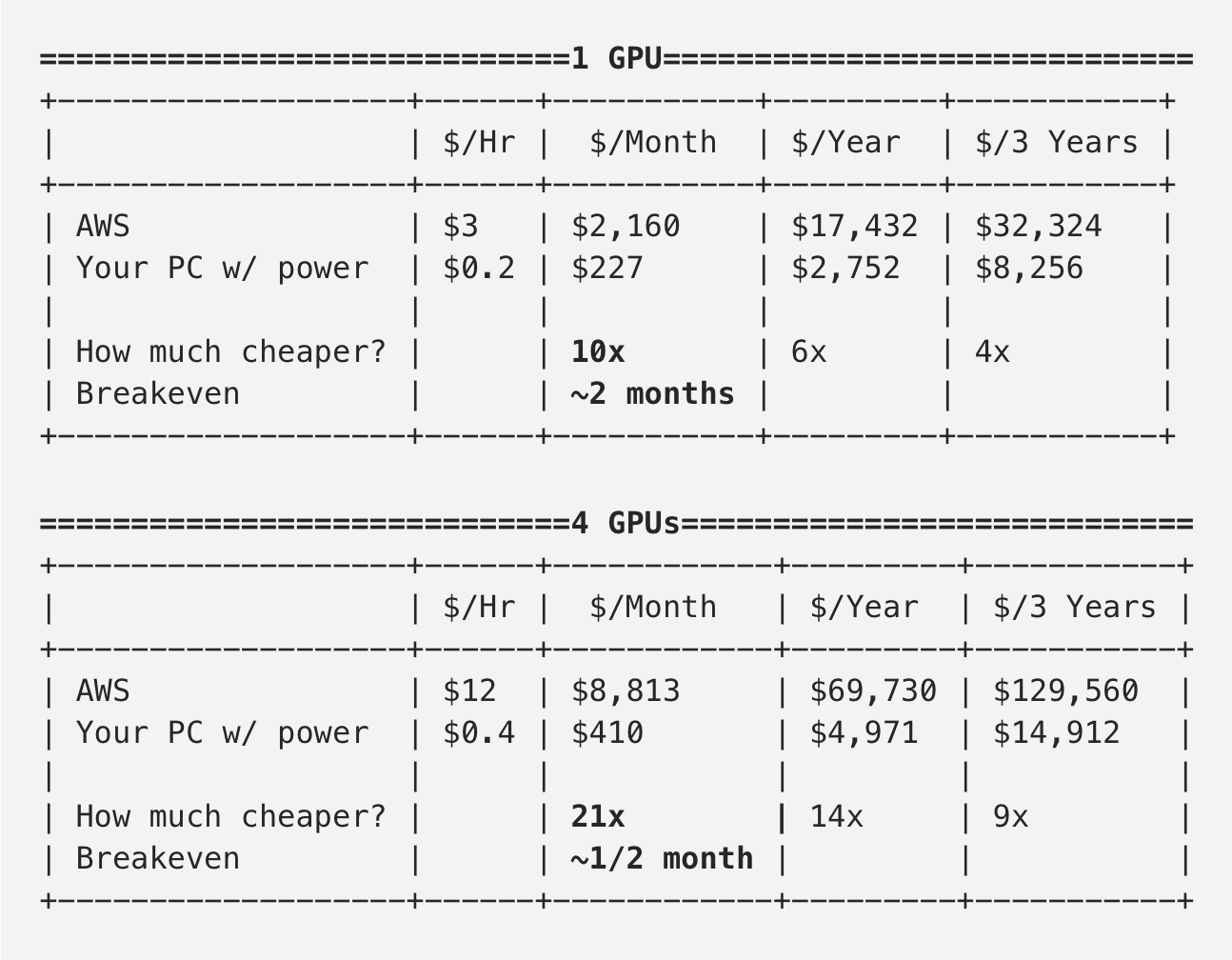

CLOUD VS. ON-PREMISE - Total Cost of Ownership Analysis | Deep Learning Workstations, Servers, GPU-Cloud Services | AIME

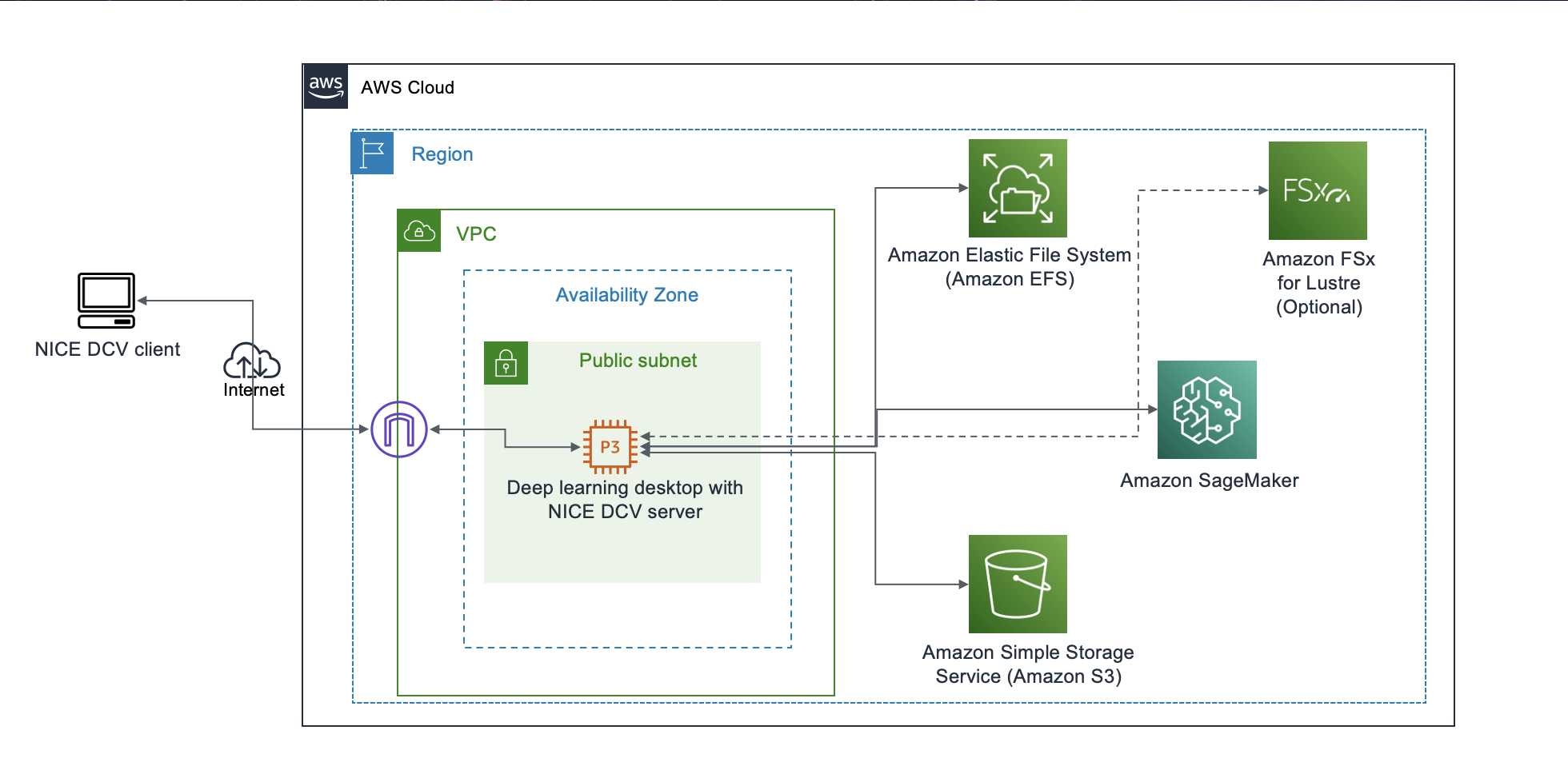

Field Notes: Launch a Fully Configured AWS Deep Learning Desktop with NICE DCV | AWS Architecture Blog

Optimizing I/O for GPU performance tuning of deep learning training in Amazon SageMaker | AWS Machine Learning Blog
